The Questions Every AI Leader Should Be Asking About Production Agents

Paul Chada · 4 min read

I went to Cal State Fullerton to talk about deploying AI agents. The students asked the questions that too many practitioners forget: What about privacy? What about security? What about ethics?

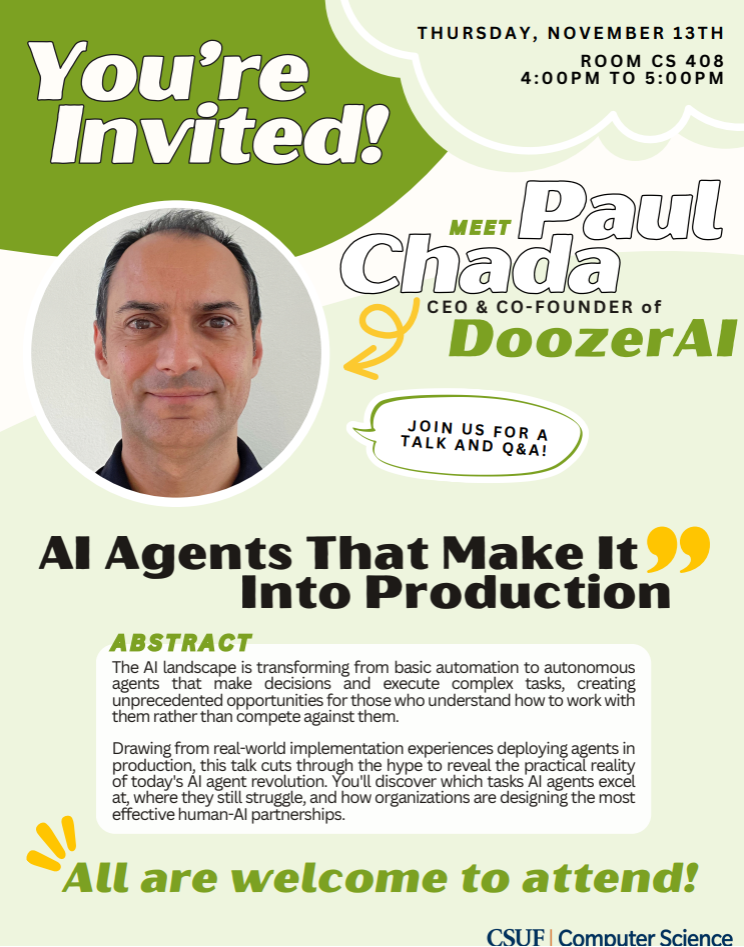

Yesterday I stood in front of 80+ computer science students at Cal State Fullerton to talk about 'AI Agents That Make It Into Production.' I came prepared to discuss deployment strategies, integration patterns, and our real-world case studies from DoozerAI. The students had other plans. They wanted to talk about the stuff that matters more than speed-to-deployment metrics.

First question out of the gate: 'How do you handle privacy when AI agents have access to sensitive business data?' Not 'how fast can you deploy' or 'what's your ROI.' Privacy. Security. Data governance. The foundational questions that too often get hand-waved away in the rush to ship.

These students are asking the questions that boardrooms should be asking. They're thinking about ethics before they write their first line of production code.

What struck me most: these aren't just future developers - they're future leaders who are grappling with how we work alongside autonomous agents rather than compete against them. They understand that technical capability without an ethical framework is just expensive chaos.

We spent an hour discussing real production scenarios from DoozerAI deployments. When I mentioned our finance automation case (90% time savings, 99.7% accuracy), the questions weren't about the metrics. They were about: What happens to the people whose jobs changed? How do you ensure the AI doesn't perpetuate biases in approval workflows? What's your rollback strategy when things go wrong?

One student asked: 'How do you prevent AI agents from becoming black boxes that nobody understands?' Another: 'What's your responsibility when an AI agent makes a mistake that affects real people?' These are the conversations we need to be having everywhere, not just in CS classrooms.

Massive thanks to Mine Hagen, Anand Panangadan, Kiran George, and the entire CSUF CS faculty for the invitation. And to the students - Riya Jain, Mario Pinzon, Christian Carrillo, Owen Rotenberg, Winnie Han, Khadar Chittor, Yash Bagal, Hardavi Thoria, Bryan Alarcon, and the many others who engaged with thoughtful questions and shared their own insights.

The next generation is approaching AI with both technical rigor and human wisdom. They're not choosing between innovation and responsibility - they're insisting on both. That combination is exactly what we need.

To the CSUF CS community: Thank you for the reminder that building AI that makes it into production isn't just about what works - it's about what we should build, how we should build it, and who it should serve. Keep asking the hard questions.

Looking forward to seeing what you all build next. 🚀